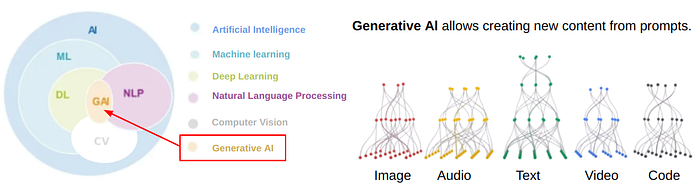

Generative AI is the state of the art in machine learning and has recently made significant advances with the emergence of high-performance AI chatbots.

Let’s discover its history, from simple, specialized text-based machine learning models to universal large language models operating at vast scale:

- 💬 AI ChatBots

- 🔥 From NLP to Multimodal GAI ChatBots based on LLM

- 🧪 LLMs Timeline: Closed-source vs. Open-source

- 🎯 From Specialized Model to Generalized ML Model

- 📈 Scaling Laws & Performance

- 🏁 Technological and Economic Race

- 🚀 Go Further with Limited & Augmented LLMs

💬 AI ChatBots

Building generalist AI chatbots is the most complex challenge for a technology that simulates human intelligence, since such chatbots form the basis of an artificial human assistant. They have achieved dazzling success with the release of ChatGPT, a new milestone reached after Google Home and Amazon’s Alexa. The technology behind these products has considerably disrupted and improved the ecosystem, although it is not perfect yet (and perhaps never will be).

Interest in chatbots followed interest in deep learning, which took off around 2010. Until then, chatbots had been reserved for a limited audience. Interest then exploded in November 2022 with the public release of ChatGPT, which ramped up to 100M monthly active users (MAUs) in two months, a world record (4.5x faster than TikTok), and has since reached around 1.6B monthly visits. Meanwhile, machine learning and deep learning had kept growing steadily in the background for ten years.

For an order of magnitude, the human brain has on average about 100 billion neurons and 100 trillion synapses. ChatGPT Plus (the paid version of ChatGPT) relies on deep learning models based on GPT-4, whose size, according to unofficial estimates, approaches these values (OpenAI keeps the exact figures private). But a biological neuron or synapse is far more powerful than an artificial neuron or connection in a deep learning model. The gap remains significant, above all on reasoning tasks involving unfamiliar problems, which require many resources.

🔥 From NLP to Multimodal GAI ChatBots based on LLM

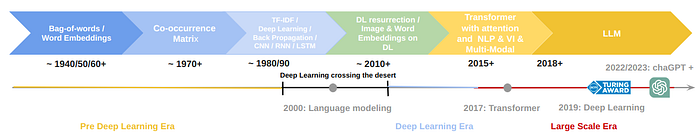

Thanks to Large Language Models (LLMs) powering generative AI, chatbots keep getting closer to the goal of simulating human intelligence. NLP models have progressively moved from simple parsing to complex processing of language structure, from shallow learning to deep learning, from single-language word vocabularies to token vocabularies with multilingual embeddings, and from recurrent networks (RNNs such as LSTMs) to the Transformer architecture built on multi-head attention layers.
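To make the last step of this evolution more concrete, here is a minimal, dependency-free Python sketch of multi-head self-attention, the core operation of the Transformer layers mentioned above. It applies the standard formula softmax(QKᵀ/√d_k)V separately to each head. The helper names, the toy 3-token input, and the use of identity matrices in place of learned projection weights are illustrative assumptions, not how any particular LLM is implemented.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply an (n x k) matrix by a (k x m) matrix."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of scores (subtracts the max first)."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Single head: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(q[0])
    k_t = [list(col) for col in zip(*k)]  # transpose K
    scores = matmul(q, k_t)               # (tokens x tokens) similarity scores
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v)             # each output row mixes the value rows


def multi_head_self_attention(x: Matrix, w_q: Matrix, w_k: Matrix, w_v: Matrix,
                              w_o: Matrix, num_heads: int) -> Matrix:
    """Project x into Q, K, V, split the feature dimension into num_heads slices,
    attend within each slice, concatenate the heads, and apply the output projection."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_model = len(q[0])
    assert d_model % num_heads == 0, "d_model must be divisible by num_heads"
    d_head = d_model // num_heads
    heads = []
    for h in range(num_heads):
        sl = slice(h * d_head, (h + 1) * d_head)
        heads.append(scaled_dot_product_attention(
            [row[sl] for row in q], [row[sl] for row in k], [row[sl] for row in v]))
    # Concatenate the per-head outputs back into rows of width d_model.
    concat = [sum((head[i] for head in heads), []) for i in range(len(x))]
    return matmul(concat, w_o)


def identity(n: int) -> Matrix:
    """Hypothetical stand-in for learned projection weights."""
    return [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]


# Toy embeddings for 3 tokens with d_model = 4 (made-up values).
x = [[1.0, 0.0, 0.0, 1.0],
     [0.0, 1.0, 1.0, 0.0],
     [1.0, 1.0, 0.0, 0.0]]
eye = identity(4)
out = multi_head_self_attention(x, eye, eye, eye, eye, num_heads=2)
print(len(out), len(out[0]))  # prints: 3 4
```

Real Transformers learn `w_q`, `w_k`, `w_v` and `w_o` during training, add causal masking for text generation, and rely on optimized tensor libraries rather than nested Python lists; this sketch only shows how each head attends over its own slice of the embedding dimensions before the results are concatenated.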

Finally, by incorporating other types of data (such as images, audio, …), NLP models became multimodal generative models, where varied instructions as input produce varied results as output.
